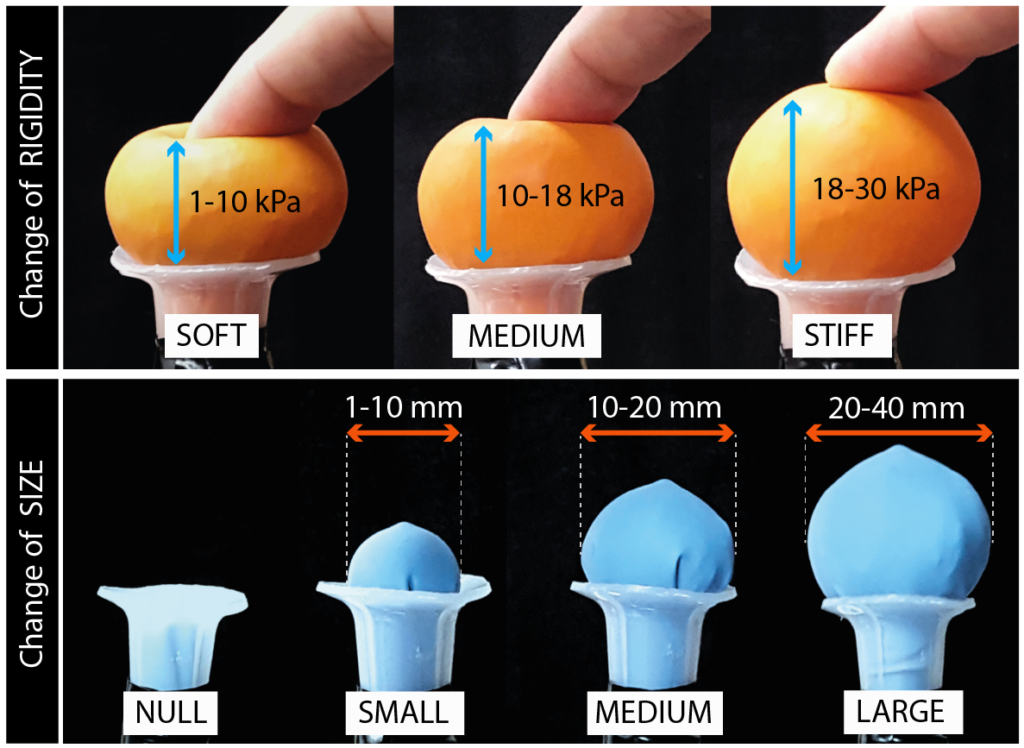

Volflex++ is a haptic shape-changing interface capable of altering its size and rigidity simultaneously to present the characteristics of virtual objects physically. The interface is composed of an array of computer-controlled balloons with two mechanisms, one for changing size and one for changing rigidity.

Shape-changing interfaces do not yet hold a defined position in current VR/AR research.

We provide basic knowledge for developing novel types of haptic interfaces that can enhance the haptic perception of virtual objects, allow rich embodied interactions, and synchronize the virtual and physical worlds through computationally controlled materiality.

The results of this study have been presented at the IEEE VR Conference 2019 in Osaka (JP).

PSYCHOPHYSICAL EXPERIMENT: Haptic Perception of Size and Rigidity

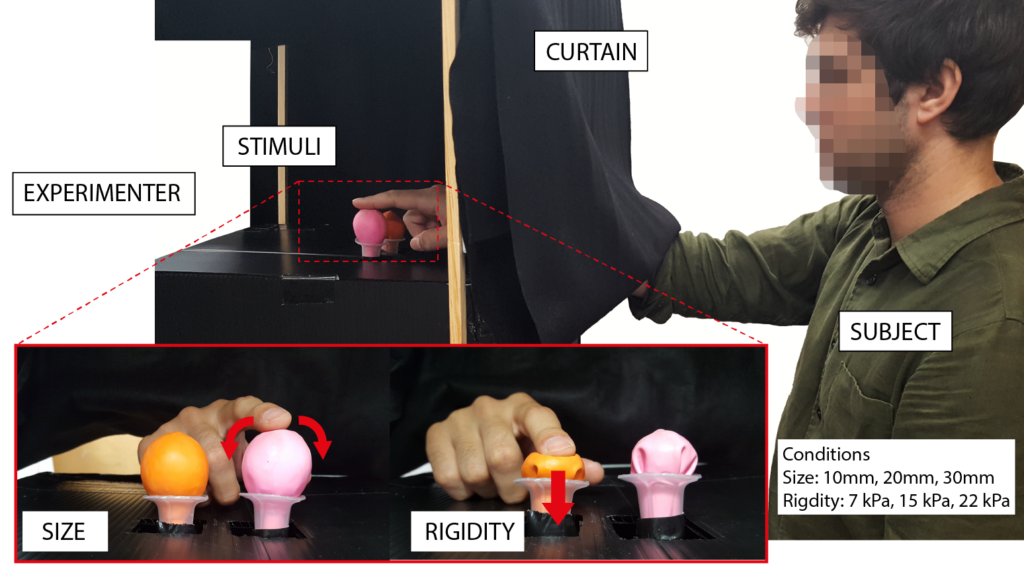

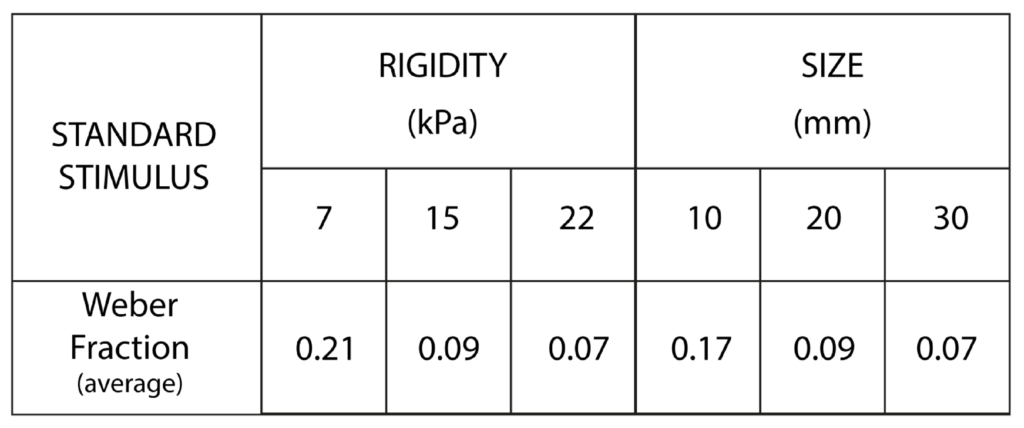

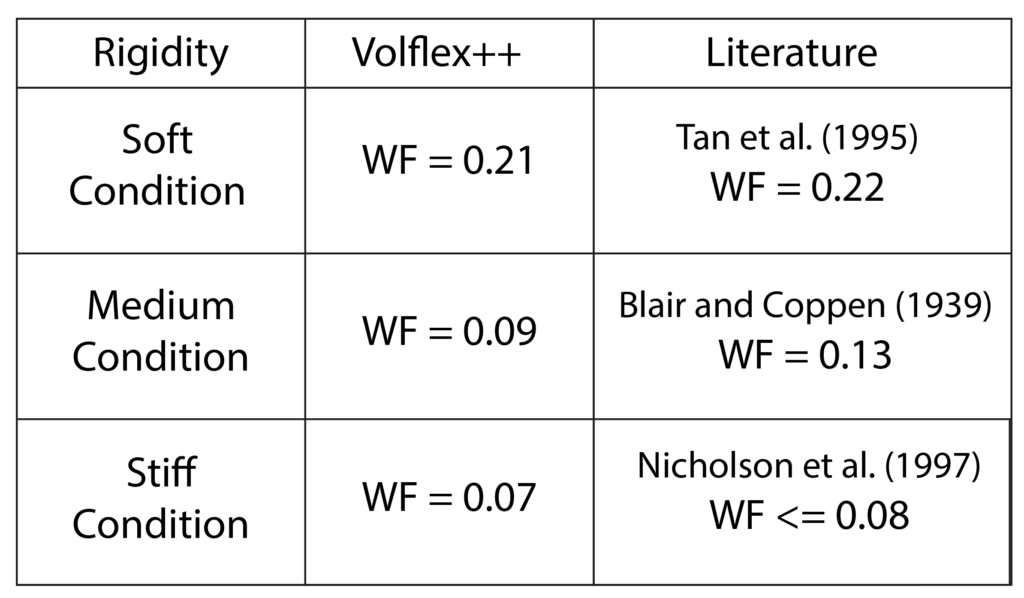

We conducted a psychophysical study to measure human tactile perception of changes in size and rigidity. We report three psychophysical metrics: the Point of Subjective Equality (PSE), the Just-Noticeable Difference (JND), and the Weber Fraction (WF).
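As an illustration of how these three metrics relate, the sketch below derives them from a fitted psychometric function. The source does not state the fitting procedure, so everything here is an assumption: a method-of-constant-stimuli style dataset, a cumulative Gaussian model fitted by least squares on probit-transformed proportions, and the common convention of taking the JND as the distance from the 50% point to the 75% point. The function names and the data are hypothetical and are not taken from the study.

```python
from statistics import NormalDist

def fit_psychometric(levels, p_greater):
    """Fit a cumulative Gaussian via a least-squares line on probit scores.

    Model (assumed): P("comparison judged greater") = Phi((x - mu) / sigma).
    Returns (mu, sigma).
    """
    std = NormalDist()
    # Clamp proportions away from 0 and 1 so the inverse CDF stays finite.
    z = [std.inv_cdf(min(max(p, 0.01), 0.99)) for p in p_greater]
    n = len(levels)
    mean_x = sum(levels) / n
    mean_z = sum(z) / n
    sxx = sum((x - mean_x) ** 2 for x in levels)
    sxz = sum((x - mean_x) * (zi - mean_z) for x, zi in zip(levels, z))
    slope = sxz / sxx                    # equals 1 / sigma
    intercept = mean_z - slope * mean_x  # equals -mu / sigma
    return -intercept / slope, 1.0 / slope

def psychophysical_metrics(levels, p_greater, reference):
    """Return (PSE, JND, WF) for one reference stimulus."""
    mu, sigma = fit_psychometric(levels, p_greater)
    pse = mu                                   # stimulus judged "greater" 50% of the time
    jnd = NormalDist().inv_cdf(0.75) * sigma   # distance from the 50% to the 75% point
    wf = jnd / reference                       # Weber fraction
    return pse, jnd, wf

# Synthetic, illustrative data only: proportions generated from a known curve.
levels = [80.0, 90.0, 100.0, 110.0, 120.0]
true_curve = NormalDist(mu=100.0, sigma=10.0)
proportions = [true_curve.cdf(x) for x in levels]

pse, jnd, wf = psychophysical_metrics(levels, proportions, reference=100.0)
print(f"PSE={pse:.2f}  JND={jnd:.2f}  WF={wf:.3f}")
```

With real response data, a maximum-likelihood fit that weights each level by its number of trials would usually be preferable to this unweighted probit regression; the simpler version is used here only to keep the relationship between the metrics visible.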

The experiment consisted of two sessions, one dedicated to the human haptic perception of changes in rigidity and the other to changes in size.

Our results contribute to understanding how variable physical cues are perceived, with a view to designing shape-changing interfaces for AR and VR.

RESULTS

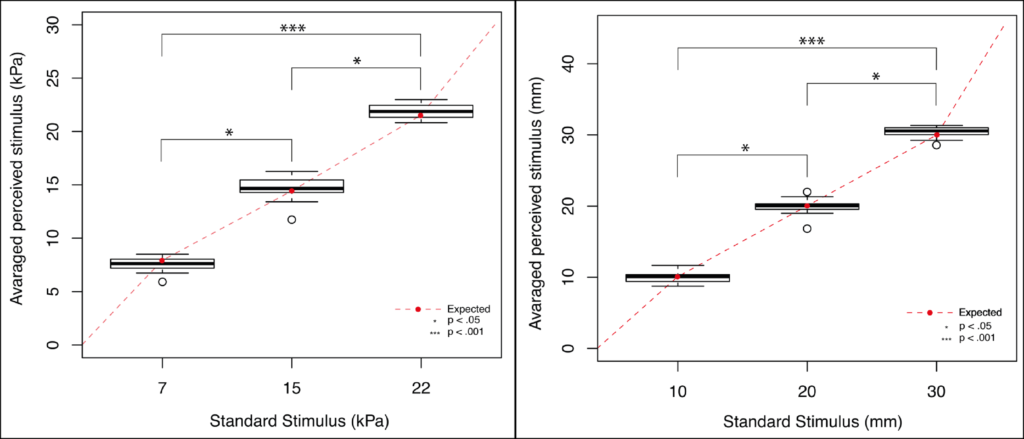

Point of Subjective Equality (PSE)

Results of ANOVA: the three rigidities and the three sizes can be correctly discriminated.

The third condition (stiff and large) is the most uniform.

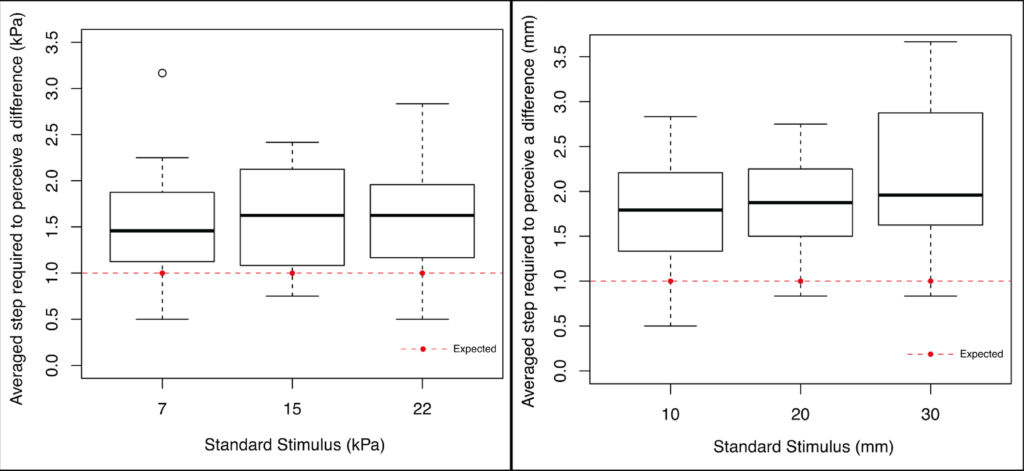

Just-Noticeable Difference (JND)

- For Rigidity:

– Complex interplay of sensory information [1].

– Force feedback can influence perception, resulting in a large JND [2].

– Visual stimuli can enhance perception [3].

- For Size:

– The JND increases as the reference stimulus increases [4].

Weber Fraction

For Rigidity:

For Size:

The WF is larger for small stimuli and smaller for large stimuli [8].

Spherical objects do not seem to follow this pattern [9].

We used contour following, while [9] used hand enclosure, which provides only gross information.
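For reference, the Weber fraction relates the JND to the magnitude of the reference stimulus. The notation below is chosen here for illustration ($I$ for the reference stimulus, $k$ for a constant) and is not taken from the paper:

```latex
\mathrm{WF} = \frac{\mathrm{JND}}{I},
\qquad
\text{Weber's law: } \mathrm{JND} = k\,I \;\Rightarrow\; \mathrm{WF} = k \ \text{(constant)}
```

A WF that falls as $I$ grows, together with a JND that rises with $I$, is what one would expect if the JND grows more slowly than in proportion to the reference stimulus.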

[1] Di Luca (2014). Multisensory Softness: Perceived Compliance from Multiple Sources of Information. Springer.

[2] Tan et al. (1993). Manual Resolution of Compliance when Work and Force Cues are Minimized. Advances in Robotics, Mechatronics and Haptic Interfaces, DSC-Vol.49, pp. 99-104.

[3] Gaffary et al. (2017). AR feels softer than VR: Haptic perception of stiffness in augmented versus virtual reality. IEEE Trans Vis Comput Graph, pp. 2372-2377.

[4] Durlach et al. (1989). Manual discrimination and identification of length by the fingerspan method. Perception & Psychophysics, pp. 29–38.

[5] Tan et al. (1995). Manual discrimination of compliance using active pinch grasp: The roles of force and work cues. Perception & Psychophysics, vol. 57, no. 4, pp. 495–510, Jun 1995.

[6] Blair, Coppen (1939). The subjective judgements of the elastic and plastic properties of soft bodies; the “differential thresholds” for viscosities and compression moduli. Proc. R Soc Lond Ser B Biol Sci 128(850), pp. 109–125.

[7] Nicholson et al. (1997). Reliability of a discrimination measure for judgements of non-biological stiffness. Manual Ther 2(3), pp. 150–156.

[8] Gaydos (1958). Sensitivity in the judgment of size by finger-span. The American Journal of Psychology, vol. 71, no. 3, pp. 557–562.

[9] Kahrimanovic et al. (2011). Discrimination thresholds for haptic perception of volume, surface area, and weight. Attention, Perception, & Psychophysics, vol. 73, no. 8, pp. 2649–2656.

CONCLUSION

- Volflex++ has the potential to present a wide variety of sizes and rigidities that are distinguishable by touch.

- Such cues might be perceived by human touch similarly to physical materials with similar characteristics.

- It can be used to represent virtual objects physically, with dynamic size and rigidity.

PUBLICATIONS

Alberto Boem, Yuuki Enzaki, Hiroaki Yano, Hiroo Iwata. 2019. Human Perception of a Haptic Shape-changing Interface with Variable Rigidity and Size. In Proceedings of the IEEE Conference on Virtual Reality and 3D User Interfaces, Osaka (JP), 2019. DOI:10.1109/VR.2019.8798214